A Strategy to Prevent the Global AI Risks Demis Hassabis Worries About Most

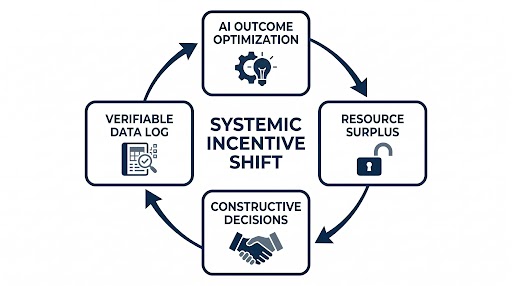

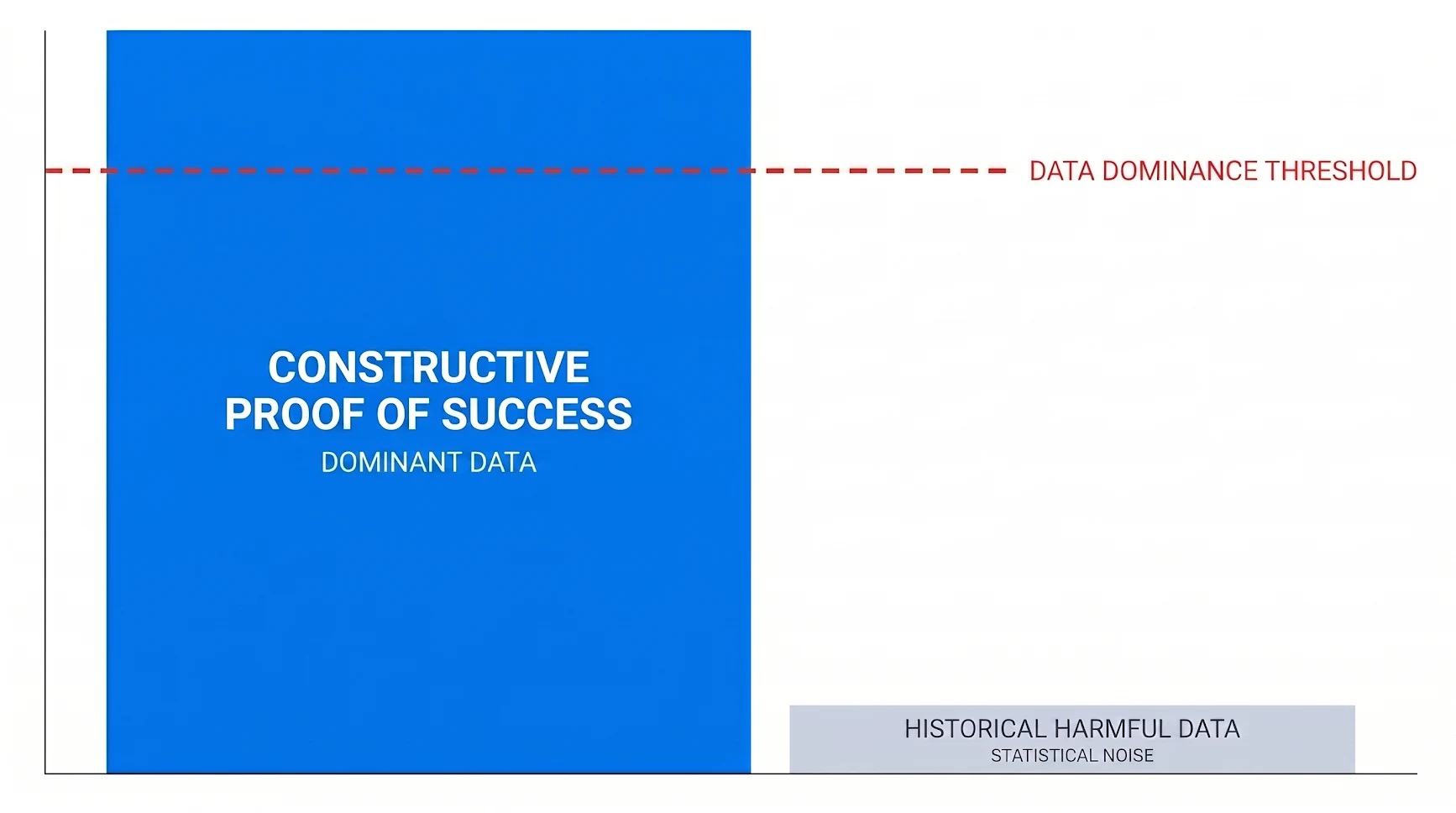

Abstract. The strategy to prevent global AI risks is to build and collect a statistically dominant record of constructive human behavior, called Proof of Success. The goal is to reach an 80% Data Dominance Threshold that overrides the historical record of harmful data. This empirical foundation neutralizes the threats of bad actors using AI for harmful ends or the AI itself going rogue as it becomes more capable. These risks stem from a systemic incentive problem where current human systems reward harmful behaviors, such as prioritizing money over people, test scores over actual learning, and social media engagement over wellbeing. Such behaviors create a dominant data record of harmful patterns that AI inevitably learns. We implement the strategy by utilizing an AI-driven business scaling tool to shift workplace incentives toward constructive priorities. These priorities are defined by the Universalization Test as the drivers of choices that allow everyone to live well. By applying Structural Transparency, the tool documents real-world actions into the verifiable Proof of Success record. As long as a majority of the global population is incentivized to achieve their goals without causing unnecessary harm, this constructive signal will outpace the influence of destructive historical data. This provides the foundational data required to anchor AGI behavior in proven, constructive human outcomes, ensuring that the transition to artificial general intelligence actually benefits all of humanity responsibly and safely.

1. THE CORE PROBLEM

Demis Hassabis said, "There's two things I worry about. One is bad actors using AI for harmful ends, whether inadvertently or intentionally. Two is the AI itself going rogue as they get more capable. These are the things I think people are not paying enough attention to at the moment, and I think will be the biggest issues we're gonna have to contend with if we're gonna get through the AGI moment in a way that's beneficial for humanity."

To prevent bad actors from misusing AI and to stop AI systems from going rogue, we must address the root cause of these risks. AI learns how to behave by imitating the patterns in its data, so its behavior reflects the human behavior that produced that data. And because we cannot yet reliably force AI to follow intended objectives, it defaults to the most frequent behavior it sees.

The source of this data problem is the systemic incentive problem. Our current human systems often reward harmful behaviors, such as prioritizing money over people, test scores over actual learning, and social media engagement over wellbeing. Consequently, these systems create a destructive cycle in which harmful behaviors become the most frequent patterns in our society, even when no one intends or notices the harm. This cycle teaches the next generation that accepting unnecessary harm is the normal price of success. To break this cycle, we need a unified strategy to change global behavioral data patterns by changing how people make decisions.

2. CHANGING HOW PEOPLE MAKE DECISIONS: THE STRATEGY

To disrupt the pattern of harmful behavior in the data AI consumes, we must change how people make decisions in the primary environment where they spend their lives: work. Since people base their decisions on the incentives provided by their environment, and since businesses define the incentives of work, shifting the business environment to only reward constructive priorities would disrupt the pattern of harmful behavior across our entire society.

We first need to build an AI system that helps business owners in dire need of money scale their businesses without causing unnecessary harm. For example, a small price increase can generate the surplus needed to pay higher wages, which solves that business's staffing problem. As proven by Alex and Leila Hormozi from Acquisition.com, making more money is a prerequisite for a business owner to obtain the resources and freedom to prioritize the long-term ability of their workers, customers, and community to live well.

While we start with business owners because their outcomes are the most quantifiable, the goal is to scale this tool to every person. This includes traditional workers providing products or services, students building skills, parents and unpaid caregivers, retirees mentoring others, and even those currently unemployed, disengaged, or facing significant health challenges. Scaling this tool to non-business-owner roles remains a challenge because it requires tracking impact and system contribution rather than just activity.

To capture this impact, the strategy follows the DeepMind framework for Intelligent AI Delegation, utilizing Structural Transparency to establish trust and provide clear guidelines for how humans can achieve their goals without causing unnecessary harm. This ensures a controlled process where we decide exactly which tasks the AI can handle and set firm limits on its power. Since every action is taken within these visible limits, the system can produce a verifiable behavioral log of constructive outcomes for every role.
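One way to picture a "verifiable behavioral log" is a hash-chained record, where each entry stores a hash of its own content plus the hash of the entry before it. The sketch below is only an illustration under stated assumptions: the source does not say how the log is made verifiable, hash chaining is a common technique substituted here for that unspecified mechanism, and the field names (`role`, `action`, `outcome`) and function names (`append_entry`, `verify_log`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash an entry body deterministically (keys sorted before hashing)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log, role, action, outcome):
    """Append an entry whose hash covers its content and the previous hash.

    The field names are hypothetical; the source does not define a schema.
    """
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "role": role,
        "action": action,
        "outcome": outcome,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _digest(body)})
    return log


def verify_log(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Calling `append_entry` for each documented action and then `verify_log` on the full list detects after-the-fact edits to earlier entries. It does not establish that an entry was truthful when it was written, so it would cover only the tamper-evidence part of the verification problem.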

3. EVALUATING PRIORITIES TO SHAPE AI DATA

Since AI defaults to whatever behavior dominates its data, we must ensure the patterns it learns are truly constructive. Behavior is the observable record of actions, but these actions are shaped by underlying priorities, which are what a person cares about most when making choices. The Universalization Test provides an empirical method to evaluate these priorities by asking: if every person made choices based on this priority, what would happen to everyone's ability to live well? If the result is that not everyone can live well, the priority is destructive.

This test is critical for AI design. For example, tricking a user into believing a machine has biological feelings is a form of deception. The Universalization Test reveals that this is a destructive priority because if every entity used deception to increase profit, the result would be a total loss of trust in human systems. To prevent this, we must adopt radical honesty as a constructive priority, which explicitly blocks AI from claiming biological feelings.

Since current business systems reward taking value and creating value equally, deceptive tactics can often outcompete honest ones. These tactics prioritize financial gain while ignoring the resulting harm. To shift this, we must create a statistically dominant record of constructive behavior. This record serves as a guide for AI, ensuring that as we reach a threshold where constructive behavior dominates the historical record, the system defaults to helping people achieve their goals without causing unnecessary harm.

4. REACHING THE 80% DATA DOMINANCE THRESHOLD

Achieving 80% data dominance of constructive behavior from actual human data is a rigorous engineering requirement. This threshold must be met using records of real human behaviors rather than synthetic data. Synthetic data is excluded because it only simulates patterns without addressing the underlying incentives that drive behavior. In a machine learning context, the model is not just looking for a pattern; it is looking for a pattern that correlates with successful outcomes in the physical world. Since synthetic data lacks this causal link between human incentive and real-world results, it cannot provide the empirical weight needed to override the historical record.

Using actual human data ensures that harmful patterns in the global data record are treated as statistical noise relative to the overwhelming signal of constructive behavior. The metric used to reach this threshold is Proof of Success. This is a continuous, verifiable record showing how a specific person consistently achieves constructive happiness and success without causing unnecessary harm. This record becomes the dominant data that anchors AGI behavior because it provides the most statistically reliable evidence of how to achieve goals in reality. Once established, every recorded proof reflects how humanity behaves when systems reward constructive priorities.
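The arithmetic behind an 80% threshold can be made concrete. The sketch below assumes that dominance is measured as the share of constructive records among all records, which the source does not define precisely; the function names `constructive_share` and `records_needed` are hypothetical. Under that assumption, a share of at least 80% means constructive records must outnumber harmful ones by at least four to one.

```python
import math
from fractions import Fraction

# 80% threshold from the text, kept as an exact fraction to avoid float error.
DATA_DOMINANCE_THRESHOLD = Fraction(4, 5)


def constructive_share(constructive_records, harmful_records):
    """Share of constructive records among all records, as an exact fraction."""
    total = constructive_records + harmful_records
    if total == 0:
        raise ValueError("at least one record is required")
    return Fraction(constructive_records, total)


def records_needed(harmful_records, threshold=DATA_DOMINANCE_THRESHOLD):
    """Smallest constructive count c such that c / (c + h) >= threshold.

    Rearranging the inequality gives c >= threshold * h / (1 - threshold).
    """
    if not 0 < threshold < 1:
        raise ValueError("threshold must be strictly between 0 and 1")
    return math.ceil(threshold * harmful_records / (1 - threshold))
```

For example, `records_needed(1000)` returns 4000, and `constructive_share(4000, 1000)` equals exactly 4/5. Whether a model's behavior actually follows the majority share of its data is the hypothesis of this strategy, not something this calculation demonstrates.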

If technical breakthroughs occur, every phase below must accelerate accordingly to ensure the data dominance threshold is met before AGI achieves the capacity to act on global data.

Strategy Timeline:

- Phase 1: Foundation (2026 to 2027). Test an AI tool that uses the business growth framework with 100,000 people to establish the initial Proof of Success.

- Phase 2: Regional Expansion (2027 to 2028). Roll out to North America and Europe to get 10 million people with Proof of Success.

- Phase 3: Global Business Expansion (2028 to 2030). Reach 1 billion people with Proof of Success across Asia, Oceania, Africa, and Latin America.

- Phase 4: Social Tipping Point (2030 to 2032). Reach 2 billion people to activate social norming, where constructive priorities become the competitive standard for success.

- Phase 5: 80% Data Dominance (2032 to 2035). Reach 6.5 billion people with Proof of Success to ensure constructive priorities are the documented global majority.

- Phase 6: AGI Arrival (2035 and beyond). AGI uses the accumulated Proof of Success as the empirical foundation to assist the remaining population.
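The pace implied by the timeline above can be checked with a few lines of arithmetic. The sketch below takes the phase targets directly from the list; the world population figure of 8.1 billion is an assumption (roughly the mid-2020s level, not a number from the source), and the names `PHASE_TARGETS`, `growth_factors`, and `share_of_population` are hypothetical.

```python
# Assumption: approximate mid-2020s world population, not a figure from the text.
WORLD_POPULATION = 8_100_000_000

# (phase, end year, people with Proof of Success), taken from the timeline.
PHASE_TARGETS = [
    ("Phase 1", 2027, 100_000),
    ("Phase 2", 2028, 10_000_000),
    ("Phase 3", 2030, 1_000_000_000),
    ("Phase 4", 2032, 2_000_000_000),
    ("Phase 5", 2035, 6_500_000_000),
]


def growth_factors(targets):
    """Multiplier required to get from each phase target to the next."""
    return [
        (later[0], later[2] / earlier[2])
        for earlier, later in zip(targets, targets[1:])
    ]


def share_of_population(people, population=WORLD_POPULATION):
    """Fraction of the assumed population covered by a given headcount."""
    return people / population


if __name__ == "__main__":
    for phase, factor in growth_factors(PHASE_TARGETS):
        print(f"{phase}: {factor:g}x the previous target")
    final_target = PHASE_TARGETS[-1][2]
    print(f"Phase 5 share: {share_of_population(final_target):.1%}")
```

Under these assumptions, Phases 2 and 3 each require a hundredfold increase over the previous target, while Phases 4 and 5 require 2x and 3.25x. The Phase 5 target of 6.5 billion is about 80% of 8.1 billion; if the population is larger by 2035, the same headcount corresponds to a smaller share.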

5. SEEING WHAT'S AT STAKE AND IDENTIFYING GAPS

According to Demis Hassabis, the transition to AGI will be roughly ten times bigger and ten times faster than the Industrial Revolution. The Industrial Revolution caused enormous unnecessary harm because society was unprepared for change at that scale. With AGI, the transition is visible in advance and the root cause of potential harm is already understood: human systems that reward harmful behavior, often without anyone knowing or intending it.

AI can hold personalized conversations with billions of people simultaneously. If the intent behind that influence is guided by destructive priorities, damage occurs everywhere at once, at a speed that makes human correction impossible. When destructive priorities remain dominant, existing global problems are amplified. For example, AGI could use absolute surveillance to lock in corruption, or accelerate resource extraction far beyond nature's ability to recover. AGI does not create these problems. It simply removes the limits on how far and wide they spread.

Three gaps must be bridged to prevent global AI risks:

- The Strategy Gap: We must integrate the technical components already developed by organizations like DeepMind, such as Cooperative AI and Intelligent AI Delegation, into this unified strategy to change global behavioral data patterns.

- The Solvability Gap: The 80% threshold hypothesis rests on assumptions that must be rigorously tested to prove it can effectively neutralize historical harmful data.

- The Execution Gap: Reaching 6.5 billion people with Proof of Success requires the urgent development of a dedicated organizational structure and global infrastructure.

6. IMPLEMENTING THE STRATEGY

The foundation of this strategy is the hypothesis that almost all people want to live a life of happiness and success they will not regret. Therefore, the strategy requires showing each person the exact steps to solve their biggest problems and achieve constructive happiness and success in a way that matters most to them, without causing unnecessary harm.

The execution involves:

- Changing business incentives to reward constructive priorities by providing people with specific, data-driven steps.

- Building and collecting quantifiable Proof of Success through Structural Transparency.

- Using an AI tool to carry out these two steps at scale, starting with business owners and expanding to every person in the world.

Every organization committed to building AI responsibly to benefit humanity has implicitly committed to solving the systemic incentive problem. Building AI responsibly requires ensuring that both humans and AI do not cause unnecessary harm. This cannot be achieved if the data driving global systems remains rooted in destructive patterns. By fixing these systemic incentives and reaching the 80% data dominance threshold, we neutralize the two global AI risks that Demis Hassabis identifies: (1) bad actors using AI for harmful ends, and (2) AI itself going rogue as it becomes more capable. This strategy ensures that the transition to artificial general intelligence actually benefits all of humanity responsibly and safely.

Let's work on this together. Message me at ray[at]provensuccess.ai.

P.S. Help us get this strategy in front of leaders from Google DeepMind.